
Agentic Commerce Is Driving Discovery While Conversion Still Depends on the Last Mile

Oliver Bradley, Chief Digital Officer at Neem, is helping brands optimize product visuals for AI-driven shopping environments.


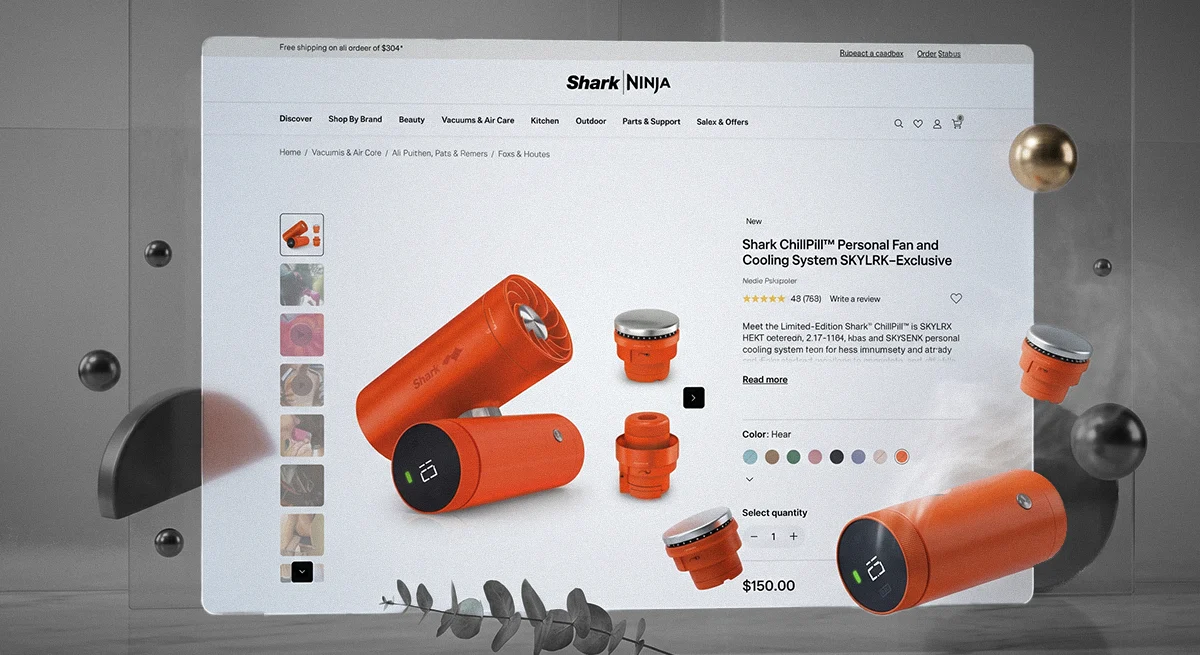

CMOs are investing heavily in agentic commerce, but many are missing the moment that actually converts. Retailers are pouring millions into AI shopping assistants designed to help customers find and compare products. As large language models move from experimentation into live deployment, these tools are getting better at summarizing product information and streamlining decision-making. But that same compression comes with tradeoffs. As titles are shortened and descriptions distilled, key details like size, count, and weight often disappear on mobile screens. The result is a quiet shift in how shoppers make decisions: the more text gets optimized, the more the product image becomes the final source of truth.

Oliver Bradley, Chief Digital Officer at Neem, was studying this exact UI challenge long before AI shopping assistants became a priority. In 2011, while at Unilever, he ran eye-tracking studies on 60 online shoppers using a $6,000 budget and a borrowed laptop. The findings were clear: shoppers largely ignored text, focusing instead on the product image and the add-to-basket button. Bradley later partnered with the University of Cambridge’s inclusive design team to adapt its exclusion calculator for digital product imagery, measuring how many shoppers could actually read key details at thumbnail size. The approach produced measurable sales lift and led to his role chairing a GS1 working group that developed the open-IP global standard for Mobile Ready Hero Images. Today, he leads development of Rhino, an AI tool designed to automate clarity and contrast testing at scale.

"Agentic will get people to the page, but people still have to look at the hero image and the content and decide, is that what I want? The basics still matter. It's like spending a lot of money on retail media and pushing people to a page that's not optimized," says Bradley. He points to a recent example to illustrate the stakes, noting that some brands are spending big to secure the top spot in Amazon Rufus. The investment reflects how quickly teams are prioritizing AI-driven discovery, but it also highlights a growing disconnect. Agentic tools can surface products and streamline comparison, but they don’t change how shoppers make the final decision. When strong discovery layers sit on top of weak product imagery, the drop-off is immediate. "They'll scroll past you and you're done. You could pay 100 grand to be at the top of Rufus, but without optimizing your hero images, you're wasting money," he notes.

The desktop dilemma

The gap often starts with how teams are structured. E-commerce typically sits within sales organizations that are still optimized for physical retail, where packaging and in-store materials take priority. That orientation carries through into the creative process, which is rarely built around how people actually shop on mobile. Review workflows reinforce the disconnect. Creative is designed and approved on large desktop screens, often under ideal viewing conditions that do not reflect real-world use.

To bridge that gap, Bradley points to the need for objective validation tools such as the Cambridge Exclusion Calculator to quantify whether key information is legible at mobile scale. "The senior team looks at everything on a desktop. The creative team creates everything on 6K 32-inch Mac monitors, and the brand manager approves it on their 14-inch corporate-issue laptop," Bradley explains. "The entire approval workflow sits on a laptop, and the only person who sees it on a phone is the shopper."

Designing for real eyes

Simple demographic realities make this gap harder to ignore. The average age in the U.S. and Europe is now over 40, a point at which eyesight typically begins to change. Yet many creative workflows still reflect ideal viewing conditions, shaped by younger teams designing on high-resolution screens. This disconnect shows up quickly when assets move to mobile. "Brands are obsessed with just Gen Alpha and Gen Z, but you're limiting yourself to those cohorts," Bradley notes. "Why don't you think about extending your penetration by designing for everybody's eyesight ability?"

That same mismatch carries into how performance is measured and optimized. Bradley points to the Cambridge Inclusive Design Toolkit and emerging accessibility standards as a way to ground decisions in real-world visibility. Rhino’s testing framework draws on WCAG 3.0 APCA contrast guidance, targeting at least Lc 45 for body text, as well as the European Accessibility Act requirement for a minimum text size of 2.4 millimeters. On a phone, key information must remain legible within a 16- to 20-millimeter thumbnail, with enough contrast to hold up under real conditions. "Consumers are multitasking," says Bradley. "One of their screens is in focus and one of them isn't. Why aren't we designing for real life?"
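The size rule in that paragraph comes down to simple proportions: text measured in pixels on the source image shrinks by the ratio of thumbnail width to image width. The sketch below is a minimal illustration under stated assumptions, not Rhino's implementation. It assumes the image is scaled uniformly so its full width fills the thumbnail, takes the 2.4 mm minimum and the 16 to 20 mm tile range from the text above, and uses hypothetical function names. It does not model APCA contrast at all.

```python
# Hypothetical legibility check (an illustrative sketch, not Rhino's method):
# how tall will text from a hero image be once the image is shrunk to a
# mobile thumbnail? Assumes uniform scaling to the thumbnail's width.

MIN_TEXT_HEIGHT_MM = 2.4                 # minimum text size cited in the article
THUMBNAIL_WIDTH_RANGE_MM = (16.0, 20.0)  # mobile tile width range cited in the article


def rendered_text_height_mm(text_height_px: float, image_width_px: float,
                            thumbnail_width_mm: float) -> float:
    """Physical height of the text on screen, assuming the image is scaled
    uniformly so its full width fills the thumbnail."""
    return text_height_px * thumbnail_width_mm / image_width_px


def min_text_height_px(image_width_px: float,
                       thumbnail_width_mm: float = THUMBNAIL_WIDTH_RANGE_MM[0],
                       min_mm: float = MIN_TEXT_HEIGHT_MM) -> float:
    """Smallest text height, in source pixels, that still reaches min_mm."""
    return min_mm * image_width_px / thumbnail_width_mm


def is_legible(text_height_px: float, image_width_px: float,
               thumbnail_width_mm: float,
               min_mm: float = MIN_TEXT_HEIGHT_MM) -> bool:
    """True if the text meets the minimum height at this thumbnail width."""
    return rendered_text_height_mm(text_height_px, image_width_px,
                                   thumbnail_width_mm) >= min_mm


def worst_case_legible(text_height_px: float, image_width_px: float) -> bool:
    """Check against the smallest thumbnail in the range, the hardest case."""
    return is_legible(text_height_px, image_width_px,
                      min(THUMBNAIL_WIDTH_RANGE_MM))


if __name__ == "__main__":
    # A 2000 px wide pack shot whose size/count text is 260 px tall.
    for width_mm in THUMBNAIL_WIDTH_RANGE_MM:
        h = rendered_text_height_mm(260, 2000, width_mm)
        print(f"{width_mm:.0f} mm tile: text is {h:.2f} mm tall")
    print("needs at least", min_text_height_px(2000), "px at 16 mm")
```

In this example, the 260 px text renders at about 2.6 mm on a 20 mm tile but only about 2.08 mm on a 16 mm tile, so it fails the worst case. At 16 mm the text would need to be roughly 300 px tall, or about 15% of the image width (2.4 / 16). That is why checking against the smallest tile matters.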

Won in the thumbnail

The commerce ecosystem is steadily tilting toward conversational experiences. Shoppers are beginning to ask chatbots to find products, and retailers are testing instant checkout flows inside AI assistants. A focus on small, visual details feels counterintuitive, but it's precisely those details that matter when AI takes over the text. This reality plays out daily on Amazon, where the Rufus assistant changes how search results are displayed. In Bradley’s testing, Rufus and similar interfaces often shorten titles in the grid view, meaning key details simply do not appear in the text.

By redesigning a pack shot to emphasize brand, format, size, and count within the small mobile tile, and by testing clarity and contrast against mobile screen parameters, Bradley consistently sees incremental sales growth. "Just taking away that tiny little bit of friction makes a massive difference. I promise my clients I'll get them at least 5% uplift. With some of my clients, I've got them 25% uplift. With one client, we've got 4.485% uplift on Amazon just from changing the hero image."

For a quick diagnostic, Bradley suggests opening a top SKU on a phone and checking whether brand, format, variant, and size are clear at a glance. These seemingly incremental optimizations scale quickly on platforms where discovery and conversion happen in the same moment. In that environment, two signals consistently drive decisions. Ratings and reviews shape how products are surfaced and summarized, while the hero image determines whether a shopper engages. "Shoppers are going to look at the number of stars and the image. These are the two critical things," Bradley concludes. "If you're a CMO, you need to have a good amount of reviews because that's what drives Rufus. And you really need to fix your hero image. Those are the two killer moves right now."